CSGO Neural Network Aimbot

A neural network aimbot for Counter-Strike: Global Offensive, utilizing OpenCV & OpenVINO on the Intel Neural Compute Stick 2.0

On the 13th of January 2019, I developed DetectionAim, a closed-source private neural network aimbot for the game Counter-Strike: Global Offensive, utilizing object detection & OpenCV for autonomous aiming at enemies with minimal anti-cheat detection vectors.

I undertook this project to explore the rapidly expanding field of artificial intelligence, and in particular the emerging class of dedicated hardware processors specialized for artificial intelligence applications, specifically the Intel Neural Compute Stick 2.0.

Similar projects have existed in the past and achieved exceptional results with high accuracy. However, all of them have relied heavily on high-performance GPUs such as the Nvidia GTX 1080 to achieve object-detection speeds exceeding 60 FPS at 1920x1080. As a result, this hack vector has been restricted to users with high-end computers, which has limited both its audience and its practicality.

The goal of this project was to circumvent these restrictions by using the neural compute stick instead. By removing the need for a dedicated GPU whilst still aiming for high object-detection accuracy and speed, we could increase the practicality and usability of these aimbots in a new way. Without the need for a beefy computer, users could instead opt for a secondary external system or "Black Box" that they plug into their setup to achieve quick & accurate enemy detection and termination.

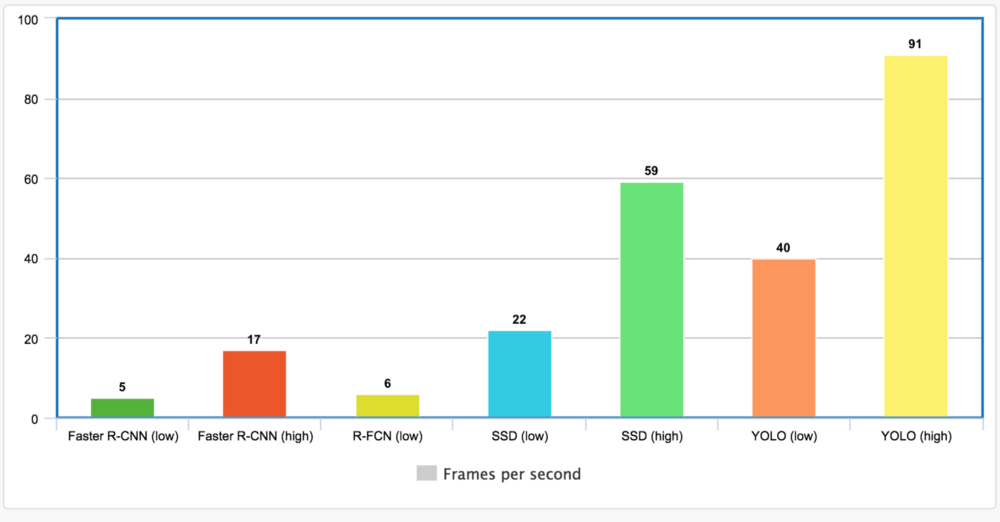

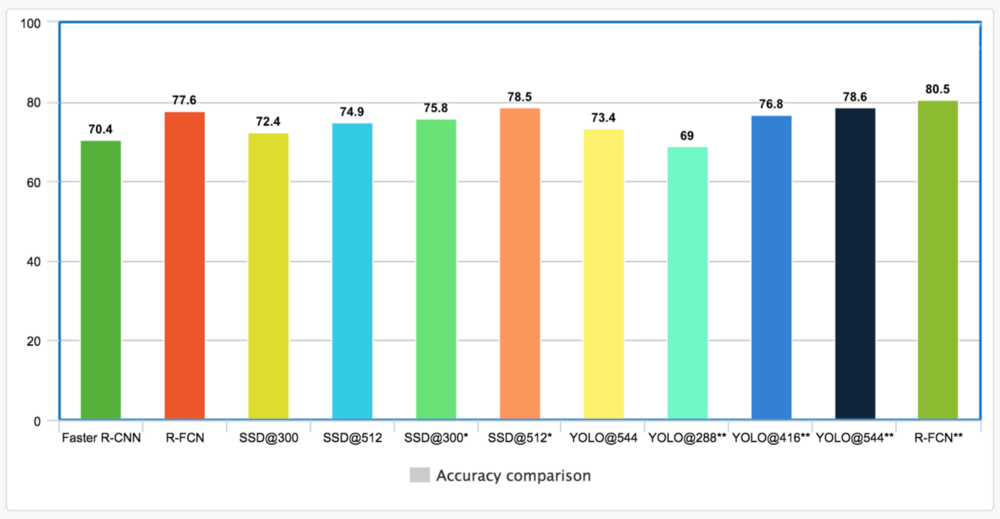

To achieve this, I needed a pre-existing, well-known, lightweight, yet reliable and accurate object-detection model to retrain. After looking at multiple neural network models and benchmarking them on a GPU setup, I was left with the following options:

After comparing both speed & accuracy, removing detection models that weren't compatible with the Movidius neural compute stick, and finally benchmarking each remaining model on the OpenVINO framework, I was left with two main options: YoloV3 and MobileNetSSD.

Whilst YoloV3 was incredibly fast at object detection, it fell behind in detection accuracy and produced many false positives, which became more frequent as the range increased. MobileNetSSD, by contrast, was slower but remained accurate at longer range.

After comparing the two, I decided to use the MobileNetSSD model and to make up for its lower speed by tweaking my screen-capturing method to reduce the amount of work per frame, and by implementing multi-threading & pipelines to streamline the process.

To do this, I first optimized the software as a whole and implemented multi-threading, both to increase overall speed and to make it easier to see where the program slowed down. By implementing three main pipeline stages, where one grabs the image, one processes it and one outputs it, I was able to increase performance by about 35%. This structure also made it easier to profile the application and find where bottlenecks occurred.
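The three-stage layout described above can be sketched with Python's standard `threading` and `queue` modules. This is only an illustrative sketch, not the project's code: the stage functions, the `run_pipeline` helper and the `SENTINEL` marker are hypothetical names, and the capture, inference and output steps are replaced by generic stand-ins.

```python
import queue
import threading

SENTINEL = object()  # hypothetical marker signalling the end of the stream


def capture_stage(frames, out_q):
    """Stage 1: push raw frames downstream (stand-in for a screen grab)."""
    for frame in frames:
        out_q.put(frame)
    out_q.put(SENTINEL)


def process_stage(in_q, out_q, process):
    """Stage 2: apply `process` (stand-in for model inference) to each frame."""
    while True:
        frame = in_q.get()
        if frame is SENTINEL:
            out_q.put(SENTINEL)
            break
        out_q.put(process(frame))


def output_stage(in_q, results):
    """Stage 3: consume processed frames (stand-in for showing results)."""
    while True:
        item = in_q.get()
        if item is SENTINEL:
            break
        results.append(item)


def run_pipeline(frames, process, maxsize=2):
    """Run the three stages concurrently, connected by bounded queues."""
    raw_q = queue.Queue(maxsize=maxsize)
    done_q = queue.Queue(maxsize=maxsize)
    results = []
    threads = [
        threading.Thread(target=capture_stage, args=(frames, raw_q)),
        threading.Thread(target=process_stage, args=(raw_q, done_q, process)),
        threading.Thread(target=output_stage, args=(done_q, results)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

The bounded queues keep a fast stage from running far ahead of a slow one, and timing how long each stage waits on its queue is one way to see where a bottleneck sits.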

Profiling revealed two main bottlenecks: the processing and, more surprisingly, the screen capture.

At the start I was using the Python library `pillow` to capture periodic screenshots of the application for later processing. This turned out to be a slow and inefficient process that significantly slowed down the application. As a result, I switched to the `mss` library, which gave significant performance benefits.
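A comparison like this can be made with a small timing harness. The sketch below is an assumption about how one might measure it, not the benchmark actually used here; `measure_fps` is a hypothetical helper that accepts any zero-argument capture function.

```python
import time


def measure_fps(grab, frames=50):
    """Call `grab` `frames` times and return the average calls per second."""
    start = time.perf_counter()
    for _ in range(frames):
        grab()
    elapsed = time.perf_counter() - start
    return frames / elapsed if elapsed > 0 else float("inf")


# Possible usage on a machine with a display and both libraries installed
# (left commented out so the sketch itself has no third-party dependencies):
#
#   from PIL import ImageGrab
#   import mss
#
#   print("pillow:", measure_fps(ImageGrab.grab))
#   with mss.mss() as sct:
#       monitor = sct.monitors[1]  # primary monitor
#       print("mss:", measure_fps(lambda: sct.grab(monitor)))
```

The absolute numbers depend on resolution, operating system and hardware, so both libraries should be measured on the same machine with the same capture region.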

The object-detection model was also lagging behind the rest of the pipeline. To solve this, I lowered the framerate fed into the model by running detection every 2 frames rather than on every frame, as the change in enemy positions between consecutive frames was negligible, which made this a worthwhile performance-to-accuracy tradeoff. Additionally, I preprocessed each captured image by downscaling it from 1920x1080 to 480x270 and converting it from RGB to BGR, the channel order OpenCV uses by default.
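To make the two savings concrete, here is a minimal sketch under stated assumptions: `pixel_reduction` and `detect_every_nth` are hypothetical helpers, and `detect` stands in for whatever inference call is used. It shows the arithmetic behind the downscale and one way to reuse the last detection on skipped frames.

```python
FULL = (1920, 1080)  # capture resolution from the text
SMALL = (480, 270)   # downscaled resolution from the text


def pixel_reduction(full=FULL, small=SMALL):
    """Return how many times fewer pixels the downscaled frame contains."""
    return (full[0] * full[1]) / (small[0] * small[1])


def detect_every_nth(frames, detect, n=2):
    """Run `detect` on every n-th frame and reuse the last result in between."""
    last = None
    for index, frame in enumerate(frames):
        if index % n == 0:
            last = detect(frame)
        yield last
```

Shrinking each dimension by a factor of 4 leaves 16 times fewer pixels per frame, and with `n=2` the detector runs on only half of the captured frames.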

Through a mixture of these techniques, I was able to increase performance from around 15 FPS to 75-90 FPS on an i7 Surface Pro 4 with 8 GB of RAM and no dedicated graphics card, utilizing only the Intel Neural Compute Stick 2.0.

Overall, I'd say this project was an immense success. That said, I don't believe artificial intelligence is the ideal way forward for game hack development. It may be a relatively easy method to code, with one model able to run inference on multiple games without adaptation, but it is still restricted by the same disadvantage humans have: information. Whilst these hacks may achieve greater accuracy than humans, the limited visual information available to them makes them obsolete when facing memory-based cheats or professional players at competitive levels of play. This hack vector is nevertheless an effective method and yet another unique spin on the traditional ways game hacks have been produced, and to an extent it pays homage to the earlier days of hack development, when approaches such as color aimbots were common.

Addendum: As I don't condone cheating and did this from a purely research standpoint, the source code for the hack or any related code pertaining to this project is closed-source and will not be available upon request to protect the integrity of Counter-Strike: Global Offensive as well as other games from the rapid increase of cheaters using artificial intelligence-based hacks.